Year in Review with AltaML Co-CEO Nicole Janssen

As 2023 draws to a close, Nicole Janssen, Co-Founder and Co-CEO of AltaML, sat down for a year-end interview to reflect on some of the most pivotal moments for the tech sector. With the future of Canada at the forefront of her mind, Janssen reiterates the country’s potential to become a global leader in responsible artificial intelligence (AI), a title she says is ours to lose. Ethical AI and regulation have been major talking points globally, driven in part by the surge of interest in generative AI (GenAI) and the hype around ChatGPT. With AI showing no signs of slowing down, Janssen offers advice on how to move forward in an AI-driven environment, aiming not only to promote higher-value work and education but also to encourage higher adoption rates.

Looking back on the past year, what were some of the most significant changes to the tech space?

The release of ChatGPT in November 2022 significantly influenced the tech landscape this year. Organizations, businesses, and individuals started to pivot and question how to apply this technology and the impact it could have personally and professionally. Naturally, there was a noticeable surge in people seeking information about what AI and GenAI are so they could begin forming their own opinions.

When technology moves as fast as AI, it’s understandable that people are hesitant about its capabilities and the benefits it can provide society. People want to distinguish between what they see in the media, discerning the truth from sensationalized content. This prompted questions about regulation and the importance of ethical AI—a major talking point as we round out the end of 2023 and one we will carry well into the new year. While there was hesitation initially, I’ve noticed a shift toward opening up those conversations with people who were hesitant. Bad actors are inevitable; what we do to deter them will have the biggest impact.

Supporting information:

- In just two months, ChatGPT reached 100 million users, becoming the fastest-growing consumer app in internet history.

- Job postings on LinkedIn that mention AI or GenAI more than doubled globally between July 2021 and July 2023.

- Geoffrey Hinton and Yoshua Bengio warned of the “risk of extinction from AI” in a public letter.

As we look ahead, what emerging technologies should businesses keep a close eye on for 2024? Why do you believe these will be notable?

The challenge with this question is that there will always be new technologies that catch and divert our attention. The fact is that the AI technology we already have is underutilized. I suggest leaning into figuring out how we can best use it and stop looking for the next best thing.

What are your predictions for the tech space in 2024? How might these developments shape the industry and broader society?

In 2024, the ethical AI conversation will continue to grow. The anticipation is for a more focused discussion on establishing best practices and how we can implement them more broadly, even with this being such a new field. The trouble right now is that we’re simply talking about those worst-case scenarios—we have to move beyond that narrative to start seeing progress. AI legislation and regulations will move forward globally. The conversation is happening in a collaborative format across different countries and regions, and that’s incredibly important. Several countries are starting to develop legislation, and we’ll continue to see those develop and take shape.

An optimistic outlook for 2024 includes an upswing in adoption rates. Overall adoption rates are still relatively low, which makes it difficult for organizations to get behind investing in AI. As a society, we’re becoming a lot more open to the idea of AI and being ready to adopt the potential that comes with it—I believe that people just don’t realize yet how ingrained AI already is in our everyday lives.

Quick fact:

- Right now, 77% of our devices have one type of AI or more.

What’s the best way forward to start encouraging higher levels of adoption?

The biggest barrier to AI adoption is fear. And the only way around fear is through education. Not only does education need to happen at the board and executive level to understand how to manage bringing AI into an organization, but it also needs to get down to the end-users. This is so that people understand how this will impact their lives, how it will affect their jobs, how they can perform best alongside it, and how they can feel that they’re being upskilled to be able to grow along with the implementation of AI so that they aren’t left behind.

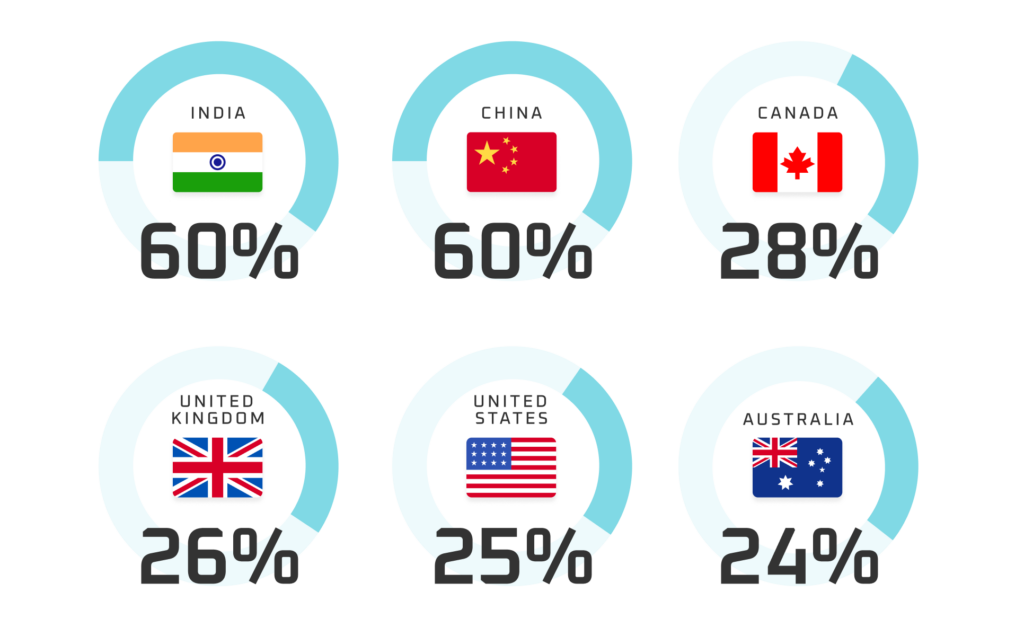

Adoption rate by country:

Source: TechReport

By the end of 2024, where do you think we’ll be globally regarding responsible AI and its development?

I see Canada coming forward with regulations similar to those of the EU. I also see the U.S., with Biden’s executive order that was recently released, seeming to align a little more closely with the UK. I believe they’re hoping that, while certain countries might be more aligned with others, there is some level of consistency in AI globally and that regulations are approached collaboratively. It’s too challenging to try to operate in the AI space as a company if you’re trying to comply with very different regulations in different jurisdictions. So, if regulations are incredibly different and onerous, the potential for AI will be squashed. We’ll likely see clarity on global responsible AI expectations in 2024.

What advice would you like to impart to people heading into the new year?

Number one is that AI has a ton of potential, but there are also risks that we all have to be conscious of. However, we’ve seen that the risks have overshadowed the potential in a lot of cases. I want people to educate themselves so that they can make educated decisions around AI and not let the worst-case scenarios paralyze them from moving forward.

My other advice is to start small. When integrating AI into an organization, it’s really easy to look at those flashy, big use cases with a ton of potential return on them. Still, those typically take a lot of time and usually require a significant amount of change management. Often, such a use case is the first one an organization starts with, and it never comes to fruition because of the amount of change management needed. What supports that change management is getting a few small wins, getting people excited about the potential of AI, and letting them see how much it can benefit them in their jobs. See the benefit it brings to the organization, and then you can start looking at those larger, meatier use cases.

Final Thoughts?

As a society, we must avoid fixating on alarmist messaging surrounding AI and instead focus on education. The more we stall or attempt to hold back progress, the further we fall behind. In 2024, we must help guide policymakers in making responsible and informed decisions regarding the development of AI technologies. Becoming the global leader in responsible AI presents the most significant opportunity for our country in our lifetime. If we don’t grab hold of this opportunity now, we will not compete. Our main goal should be to avoid stifling innovation while maintaining global relevance.